Tech-authoritarian gaslighting

A STEM-kid conception of democracy inverts its meaning

(Part one of this post can be found here.)

“All lawful use”

To understand Story 2, you must first understand Pete Hegseth. Again, I will go with a short but honest description. Hegseth is “a grifter, a narcissist, a man of petty resentments, and an abuser of his power.” He has previously been fired for financial mismanagement, inappropriate sexual behavior, and being drunk on the job—an impressive variety of assholery.

But Pete is one thing most of all: a war crimes enthusiast. Unabashed support for illegal violence on defenseless people is literally his trademark political opinion.

Hegseth rose to fame through televised lobbying for clemency for U.S. soldiers convicted of war crimes.1 Upon becoming defense secretary, one of his first acts was to fire the top lawyers in the JAG Corps, who were in charge of investigating war crimes. In September, he gave orders to kill unarmed survivors of a military strike as they clung to the wreckage of their ship—which is unequivocally a war crime.2 He has stated many times that he rejects the very concept of war crimes, in that he doesn’t believe the things we call war crimes should be illegal.

All of that is to say that Pete Hegseth’s conception of “lawful use” of military tools is extraordinarily broad. The possibility that he might, say, use autonomous weapons even if they were unreliable and killed many civilians could not possibly require less imagination.3

With that context in mind, I’ll quote Jasmine Sun for the recap of this week’s incident:

In July last year, Anthropic signed a $200 million contract with the Pentagon to provide access to Claude…The company was eager to cooperate with the US military, even partnering with Palantir. But when Claude was used for the January capture of Nicolas Maduro, that allegedly miffed an employee inside Anthropic, which got leaked back to the Pentagon. A pissed-off Pete Hegseth wanted to make super sure that Anthropic was down for anything he wanted, citing “all lawful uses”—which under US military law, means basically whatever. And that was where things got messy.

The thing is, Anthropic’s original DoW contract included two exceptions for military use: their AI could not be used for domestic mass surveillance or fully autonomous weapons. But Hegseth ignored this, demanding that the Pentagon retain full discretion over how they use Claude. When Anthropic said no, he threatened to designate Anthropic a “supply chain risk”: a highest-tier national security designation usually reserved for companies like Huawei run by foreign adversaries. (Even Tencent and DeepSeek are not tarred with this label.)…

Days passed while Hegseth’s ultimatum hung in the air. Then, on Thursday, Dario Amodei published a statement: “These threats do not change our position: we cannot in good conscience accede to their request.” The AI community praised his courage. For a moment, there was celebration.

Well, Secretary Hegseth was not bluffing. He moved ahead with designating Anthropic a supply chain risk. In a long and dumb tweet, he calls the company’s behavior a “master class in arrogance and betrayal” and “a cowardly act of corporate virtue-signaling that places Silicon Valley ideology above American lives.”

Within hours, OpenAI swooped in to accept Hegseth’s terms of “all lawful use” of their AI models, despite Sam Altman’s prior promise to hold the same red lines as Anthropic. They followed this announcement with a bunch of mealy-mouthed bullshit pretending they’d held those redlines through non-contractual means.4 Thankfully, Altman’s integrity is so tarnished by now that very few people believe that; I’ll say more in the last section.

There are so many layers and sublayers of bullshit here that it’s difficult to unpack them one at a time. I’ll give it a sporting effort, but some signposting is in order. I’ll go from most to least absurd: from the administration’s position, to those justifying it with appeals to democracy, to the “libertarians” justifying it, to those defending OpenAI. Scroll to your level of interest.

Here are eight reasons Pete Hegseth is full of shit:

1. There is nothing whatsoever wrong with a private company having moral standards more stringent than those that our increasingly dysfunctional Congress has been able to pass into law.

For example, mass surveillance of Americans through commercially available data is probably currently legal. That doesn’t make it good, or in line with the spirit of a Constitution written long before such surveillance was technically feasible. Advanced AI could make such surveillance especially powerful and dangerous in ways the law hasn’t caught up to yet—in no small part because David Sacks and tech-right SuperPACs are doing everything they can to prevent the law from catching up.

2. Even if our moral standards go no further than the law, it is perfectly reasonable to suspect that the current Administration may not scrupulously adhere to it. In fact, it is terribly naive to expect they will.

One time the Navy sprayed bacteria over the city of San Francisco to see how many people they could infect. The Air Force bombed Cambodia, then incinerated the records so that Congress wouldn’t find out. Reagan secretly sold arms to Iran so that we could fund a rebel group in Nicaragua. The CIA gave people LSD without their knowledge just to see what would happen.

In some NatSec circles, laws are mere indicators of how much you’ll be scolded if you’re caught. This was the state of play before Donald Trump was elected.

“The illegal we do immediately; the unconstitutional takes a little longer.” – Henry Kissinger

The trouble with “all lawful use” is that Presidents generally, and the current President especially, demonstrate flagrant disdain for the law, including the particular areas of law that this dispute is about. Can we trust Pete Hegseth, Donald Trump, and even future presidents to use these powerful tools in a morally responsible way—or even, in a way aligned with the wishes of the American people? The resounding historical answer is no.

3. The Pentagon already agreed to these terms last July.

The Department of War is free to do business with whoever it pleases, or to renegotiate at the end of the contract. But it is odd and unfair to break the terms of a contract it agreed to midway through, all because Pete Hegseth had a hissy fit about a random employee not liking the Venezuela coup.

4. It’s very concerning that the Pentagon refuses these terms now.

If the Pentagon refuses to do business (and in fact, incurs significant costs to break contracts) with companies that won’t help it mass-spy on Americans or set loose autonomous killbots, that is a concerning premonition of the Pentagon’s plans. Indeed, recent reporting has indicated that the Pentagon did want to use Claude to analyze bulk data collected from Americans. This is the Snowden NSA scandal on steroids, supercharged by better technology. That it’s such a footnote in this weekend’s news cycle is terrifying, and far worse than anything Anthropic has done.

5. The “supply chain risk” designation is illegal and absurd.

This is the big one. The designation was created to guard against foreign adversaries inserting spyware or defective parts into U.S. military equipment. It has never before been applied to an American company, let alone over a principled disagreement about the terms of a contract.

Such a designation is simply illegal. Even people who say it is legal cannot pretend it’s a good faith assessment of risks Anthropic poses to the U.S. supply chain. It is a transparent pretext for Hegseth’s urge to punish the company by any available means. If you think it is good and okay for the military to arbitrarily weaponize the law to punish whoever annoys Pete Hegseth, I’m afraid your values and judgment are beyond repair.

6. Even if it were valid to designate Anthropic a supply chain risk, this would not empower Hegseth to do what he’s trying to do.

Hegseth’s tweet claimed that because of the designation:

“Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic.” (emphasis added)

But “any commercial activity” is not what a supply chain risk designation prohibits. As Anthropic explains, a designation under “10 USC 3252 can only extend to the use of Claude as part of Department of War contracts—it cannot affect how contractors use Claude to serve other customers.”

Again, the legal argument from Anthropic is simply airtight. It is open-and-shut obvious that Hegseth is going beyond his legal authority, in the very same statement in which he makes such a pious appeal to the law.

7. If Anthropic were truly a supply chain risk, it is incoherent for the Department of War to keep working with them for six more months.

As Dean Ball put it, “You’re telling everyone else who supplies to the DOD you cannot use Anthropic’s models, while also saying that the DOD must use Anthropic’s models for at least six months.” Claude can be either a national security risk, or essential to national security—it cannot be both.

8. Arbitrarily attempting to destroy a $350 billion company is very bad for the AI industry, the whole economy, the U.S. military, and the race with China.

One result of this strongarming is a destabilized business environment. The risks and costs of investing in American AI companies are now higher, from recognition that at any time, the administration could crush the companies involved on a whim.5 That the White House has no legal authority to do this may not matter. When Huawei was designated, its U.S. enterprise business collapsed within 18 months.6

It is crystal clear that this confrontation was driven by Pete Hegseth’s authoritarian impulse; by his ego, his habits of chest-puffing and vice-signaling, and his pathological desire to bully into submission anyone with a conscience more active than his. Sun nails it: “This has nothing to do with national security or antiwokeness or anything like that. It is about striking fear into the hearts of any person or company…who dares cross the admin. It is rule by fear and deterrence and chilling effect.”

The sole intent of designating Anthropic a supply chain risk was to frighten other companies away from doing business with them, and from resisting the regime in general, for fear that the White House might punish them too. In other words:

Gaslighting about democracy

If you read Part I of this post, you’ll understand my exasperation with the commonest argument defending Hegseth. If the tech right is to be believed, it all comes down to their heartfelt convictions on a familiar question: “who decides?”

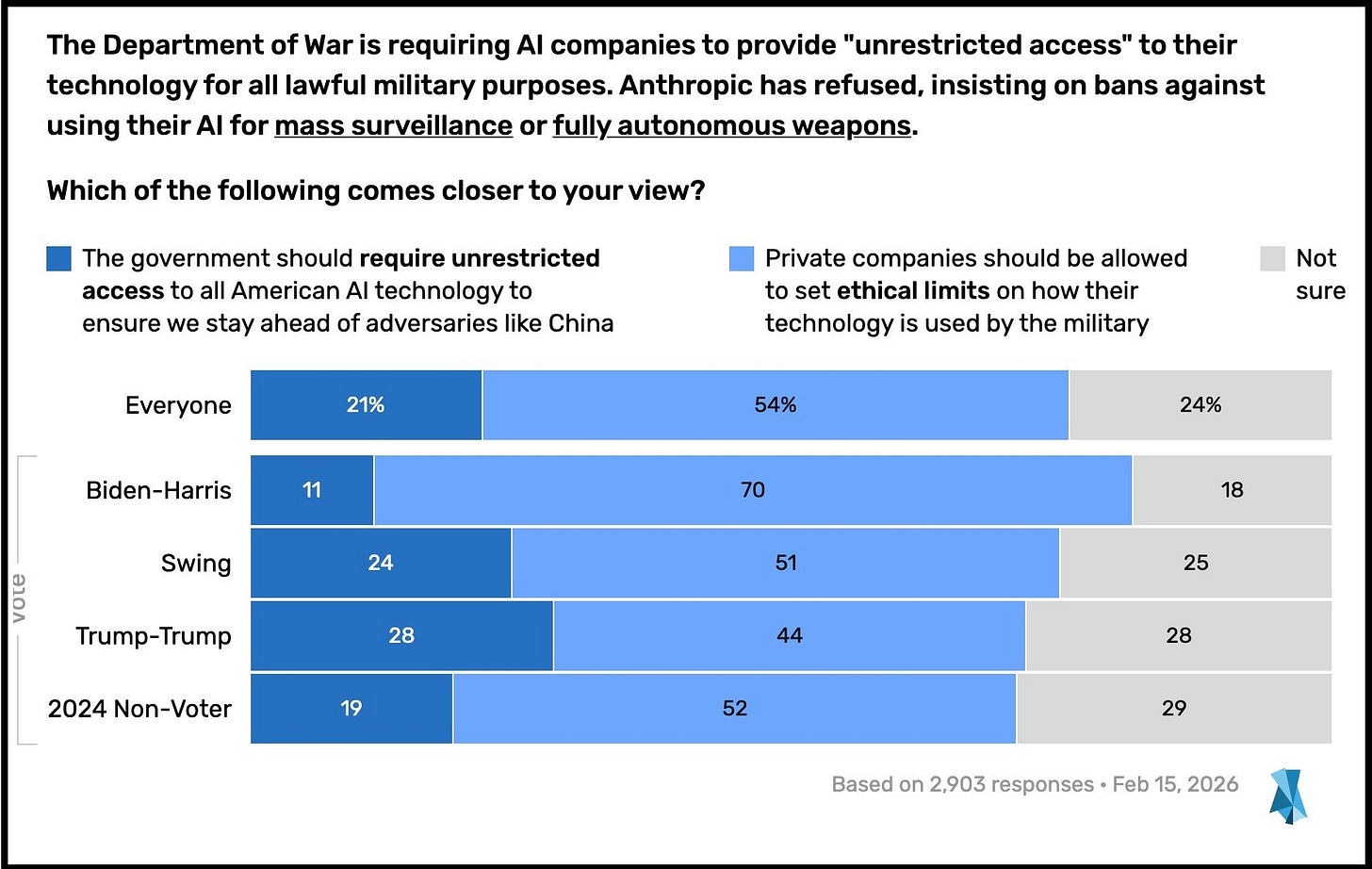

Hegseth’s own tweet was so full of lies that the argument was almost buried;7 but he does complain that military policy must be determined by “the American people,” not “unelected tech executives.” Absent was any mention of what the American people think on the issue:

Anduril’s Palmer Luckey wrote what is probably the steelman:

Do you believe in democracy? Should our military be regulated by our elected leaders, or corporate executives?…

[Describes a lot of difficult judgment calls that militaries have to make regarding technology, surveillance, and the fog of war.]

At the end of the day, you have to believe that the American experiment is still ongoing, that people have the right to elect and unelect the authorities making these decisions.

It is one thing to hear this from the administration, which has defined the law as “whatever Trump says” since the day he took power. But it’s especially rich to hear this argument from the very same “unelected tech executives” whose appointees and SuperPACs are actively stiff-arming Americans’ ability to democratically decide things.8 Hours after caving to Pete Hegseth’s demands, Sam Altman did an AMA, then summarized it with this takeaway:

“There is more open debate than I thought there would be, at least in this part of Twitter, about whether we should prefer a democratically elected government or unelected private companies to have more power. I guess this is something people disagree on, but…I don’t. This seems like an important area for more discussion.”

Set aside, for now, that this argument contradicts Altman’s own claim to be defending redlines against domestic spying and killbots, regardless of whether they are wanted by an elected government. Let’s just recap what the people singing from this song sheet have done in the past three months alone:

Threaten to illegally withhold BEAD funding from states that pass popular AI laws (and pump hundreds of millions of dollars into SuperPACs to fight such laws)

Ignore the 130-day legal limit on David Sacks’ tenure, so that he can police state AI laws without Senate confirmation

Illegally designate Anthropic a supply chain risk, and illegally broaden that designation to bar all commercial activity with Anthropic

Get caught ordering war crimes

Illegally attack Iran and Venezuela without Congressional authorization

All five of those things are both illegal and wildly unpopular. To justify them with appeals to democracy and the rule of law can only result from profound confusion or dishonesty about what those words mean.

^My response to Luckey

Let me be charitable and assume confusion. In that case, the Silicon Valley crowd is so clueless on political theory that they think “Trump was elected” is where democracy ends. They ignore the procedural and institutional ingredients needed for democracy to have moral weight and practical effect.

If these people had a more genuine interest in democratic legitimacy, they would understand it as a concept that exists in degrees, based on such factors as the popularity of the government’s action; whether the government campaigned on the action; the legality and constitutionality of the action; how many branches of government had oversight of the action; whether concentrated interests had disproportionate influence; the amount and quality of public and legislative debate; and many more.9

The tech-authoritarian playbook is to conflate the law with decrees from the (buyable) Emperor, then toss aside everything—federalism, checks and balances, the consent of the governed—that gives laws democratic legitimacy in the first place. This is the theme that unites my two stories. Such a juvenile understanding of democracy inverts its meaning from the diffusion of power (rule by the people) to the concentration of power (rule by the bullying Czar).

The grown-up belief in democracy is one where decisions like these are made through broad and ongoing popular input, bounded by constitutional guardrails. For example, whether our country goes to war with Iran should be determined by public debate between elected representatives on that specific issue, followed by a vote on that specific issue.

Whether we impose tariffs on foreign imports; whether ICE engages in mass surveillance; whether it’s kosher to kill shipwrecked survivors of a military strike; and whether we place basic transparency requirements on tech companies should be determined by similar processes, with heavy oversight from the courts and Congress. I’m old enough to remember when they were.

What prevents such democratic deliberation from deciding things today is certainly not that private companies are requesting too many conditions in their contracts with government agencies. What prevents it is the style of government that unites my stories: intimidation tactics, loyalty tests, and illegal strongarming to consolidate power in a single office. That’s why state legislatures are afraid to pass AI laws that 90% of their constituents support, and why Anthropic was designated a supply chain risk.10

Gaslighting about liberty

Given this unprecedented abuse of government authority against a leading AI innovator for the crime of keeping to a signed contract, you’d be forgiven for assuming that our free-market friends were up in arms. But would you really be shocked to hear it’s the opposite?11

Because this is my personal hell, the tech right—from Peter Thiel to Elon Musk to Marc Andreessen to David Sacks to Neil Chilson—still calls itself libertarian, even as they lapdog for an authoritarian regime. Nothing unites today’s Silicon Valley libertarians more than their hunger for unchecked political power.

Andreessen’s Techno-Optimist Manifesto includes grave warnings about hypothetical scenarios in which regulation could enable Orwellian government power. To avoid that outcome, he and his friends now clap and cheer while the government wields Orwellian power—to include the literal tech-enabled mass surveillance that Orwell wrote about in 1984!

At most, the tech right is libertarian only in its preferred policy outputs. It lacks the faintest trace of reflection or careful study on which political processes are most conducive to those outputs over the long term. Liberty is reduced to “whatever is best for big tech companies,” which in our current environment is assumed to be “whatever maximizes and centralizes the power of the federal government,” without any apparent awareness of why that’s opposite day.

Such empty ideology makes very thin cover for the profit motive and tribal biases that actually dictate these people’s politics.12 It also validates the most caricaturized depictions of libertarianism from the progressive left. They are simply billionaire stooges, who confuse their emotional irritation at the blue tribe with an old and admirable intellectual tradition.

Sadly, OpenAI

Well-meaning people in my AI policy circles have spent the past few days diving deep down the rabbit hole of OpenAI’s agreement with the Pentagon. There is nuance to the question of which redlines are worth defending and how. Contract law is complicated, and so are the technical details of the “safety stack.” And because they are careful and curious thinkers (and want not to burn bridges with lab employees), their instinct is to set aside the political spectacle of it all and dive into those details with an open mind. Or at least, to withhold judgment until the full contract is public.

It’s important that some people do that. It’s even possible that OpenAI got the best terms it could with the current DoW. Insofar as those terms are still evolving, we should press to make them as good as possible.

But just because that’s the most debatable part of the story does not make it the main story. It’s important not to be too guarded in how we explain the big picture, to which the whole debate about “all lawful use” is secondary.

The main story is that Pete Hegseth tried to bully a frontier AI lab into submission through illegal means. Such behavior is extremely bad for democracy and AI safety. It’s very important for the long-term future—more important, I feel, than the details of a paper shield against AI misuse—that this type of governance be resisted, rather than legitimized or empowered.

The morally relevant criteria by which to judge OpenAI are how they both contributed and responded to that pressure.

Their response was to pounce on the Pentagon’s terms that very night. And their contribution has been massive and ongoing support—financial, rhetorical, and symbolic—for Trump’s Administration, inauguration, and campaign. Their president was Trump’s single largest donor last quarter. Their government affairs team uses cutthroat tactics, and their SuperPAC spends hundreds of millions of dollars to resist the most basic regulatory guardrails. It also enlists dancing monkeys to flood Twitter with trashy lies.

None of this is consistent with the company’s charter, which aspires to “avoid enabling uses of AI or AGI that harm humanity or unduly concentrate power.” So you’ll forgive me for having no sympathy for Altman’s crocodile tears:

“To try so hard to do the right thing and get so absolutely like, personally crushed for it—and I know this is happening to all of you too, so I feel terrible for subjecting you all to this—is really painful.”

At best, the company is treating the rise of an authoritarian regime in the United States as an incidental obstacle to navigate in pursuit of the thing that really matters, which they see as safe AI development. That is not what it is. It is actually a threat to the long-term future coequal with the technical problems of AI alignment, because even aligned AI could so empower the bad guys.

Appropriately balancing these risks would have involved more pushback and solidarity than Sam Altman’s ambition allowed. The best response would have been what 100 of the company’s bravest employees demanded in an open letter: walk away. Stand with Anthropic. Set aside your petty rivalry and form a united front against the illegal intimidation of private enterprise. “If this is how you treat your business partners, we will take our business elsewhere.”13

Whether AI goes well will depend largely on whether it’s controlled by authoritarians. Whether AI is controlled by authoritarians will be determined largely by whether its creators submit to authoritarians. The submission is the story.

It actually started earlier than that. In 2006, Hegseth told his platoon in Iraq to ignore the rules of engagement. His unit was later nicknamed “Kill Company” and had soldiers court-martialed for killing unarmed Iraqis (though I should say that was not Hegseth’s platoon, and it was after Hegseth was reassigned elsewhere). Regardless of his personal culpability, the culture appears to have rubbed off on him.

I want to emphasize how unambiguous this is. His orders were illegal under both the Hague and the Geneva Conventions. They were illegal under the Uniform Code of Military Justice. They are such a textbook example of a war crime that the DoW’s own Law of War Manual gives “orders to fire upon the shipwrecked” as an example of “clearly illegal orders.” The Former JAGs Working Group unanimously concluded that Hegseth’s orders were war crimes, murder, or both.

Nor is Hegseth an aberration in this administration, which routinely flouts the law in brazen ways. Birthright citizenship. Impounding appropriated funds. The mass firing of civil servants. Unilateral tariffs. CECOT. The detention of migrants without trial or due process. Federalizing the National Guard for domestic law enforcement. All illegal, and all from the past year alone.

As Peter Girnus put it, “At this valuation, ‘responsible’ means: we will do the thing the other company refused to do, and we will describe doing it with the same adjective they used to describe not doing it.”

This tweet put it well:

“As of Friday, every single investor in data center infrastructure suddenly lives in a world where the U.S. government feels that it would be acceptable, in one fell swoop, to significantly undermine the entire business model of a particular frontier lab. Congratulations, data centers have now become more expensive to build - for everyone (no, not just Anthropic). This also percolates to the business of every U.S. tech company generally (not limited to AI). Even those who dislike Anthropic need to understand that U.S. businesses, and investors in U.S. businesses, require a climate of business certainty. The U.S. government, by singling out a particular U.S. company in this fashion over a mere contractual disagreement, has *already* done great harm to the U.S. business environment.”

“Procurement lawyers at Fortune 500 companies skip the statute and go straight to the headline. And the headline is that Anthropic just landed on the same list as Huawei. That comparison will do more damage than any legal mechanism.”

Inverting every sentence of Hegseth’s tweet would get you closer to the truth. No, Anthropic did not “attempt to strong-arm the US military into submission” to a contract which the U.S. government had already signed. It would have no means to do so even if it tried. The U.S. military very plainly tried to strong-arm Anthropic. Dario’s stand, at significant risk to his business, against authoritarian threats was the opposite of “cowardly” and the pinnacle of “patriotic,” etc.

There are plenty of tech-right clowns flooding Twitter with this argument too. Nathan Leamer (head of the lobbying wing of the SuperPAC OpenAI funds and coordinates) is gleefully lying as usual. Nic Carter says the U.S. should be more like China, because the alternative to China “is appalling and undemocratic.”

Whether the action was taken pursuant to foundational democratic norms like due process and equal protection; the proximity of the actor to political accountability; whether the action is subject to judicial review; the reversibility of the action by future democratic majorities, etc.

Michael Wood understands “the great story of our time: for all intents and purposes, we live under an elected (for now) monarch who makes tax, spending, and war decisions on his own by executive fiat and completely disregards Congress because he feels confident that no one will hold him to account.”

The exceptions, to their credit, are Adam Thierer and (especially) Dean Ball.

A friend asks: “Imagine if the Biden administration had a contract with xAI. Imagine they told xAI that they did an assessment, and found that Grok disfavors black people in assessing job applications, so they will have to cancel your contract if you don’t fix it. Imagine xAI refused—and then Biden declared them a supply chain risk to destroy the business.

What do you think the tech-right would say? Would it be so deferential to the wisdom of a democratically elected government?”

If OpenAI had said no, Google would have said no. If that had resulted in xAI getting the contract instead, so be it. They would be the outlier for their complicity, instead of Anthropic the outlier for its principle, and that would matter.

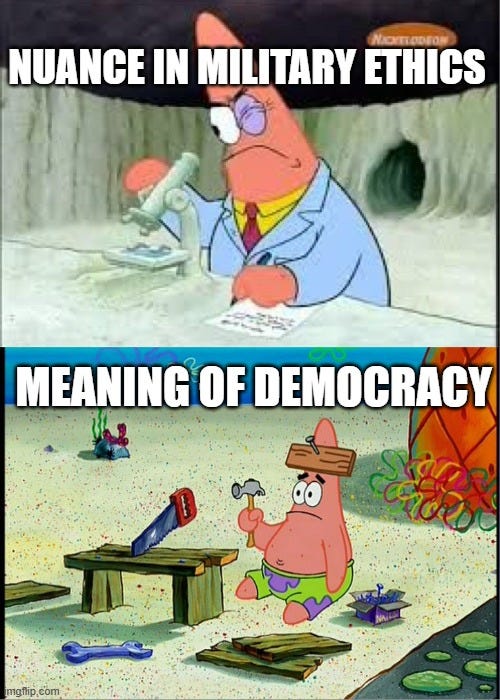

Besides the obvious problems with an authoritarian government trying to seize near-total control of arguably the most dangerous technology in the world, I'm also worried that Hegseth's completely ham-fisted approach is burying the ability of the left to engage with the actual arguments the right is making, because those arguments are being used to justify actions that are transparently terrible.

I think there's a lot to be said in the "who decides?" debate, but when the right's argument leads to "therefore, Hegseth should have access to mass surveillance tools and autonomous killbots and be able to force any company to comply with him," a lot of people will immediately assume the entire argument is bad without considering the individual points.

There's obviously a lot of merit to the idea that elected officials should have the power instead of unelected tech executives, but as this post touches on, there's an incredible amount of subtlety and nuance there (even the basic framing of the problem is just insanely misleading) and people are REALLY BAD at nuance. Once things get politicized like this, people's tendency for nuance gets even worse, and if there's one thing we should be trying to think about rationally right now, it's how the government and regulations should interact with AI.

> Because this is my personal hell, the tech right—from Peter Thiel to Elon Musk to Marc Andreessen to David Sacks to Neil Chilson—still calls itself libertarian, even as they lapdog for an authoritarian regime. Nothing unites today’s Silicon Valley libertarians more than their hunger for unchecked political power.

Yeesh. It seems like the greediest and most selfish of capitalists tend to call themselves "libertarian," not out of principle or respect for individual rights, but because they think libertarian countries (and governments) will be less likely to stop them from gaining infinite power and money. I guess they support their individual right to do whatever they want, but that surely cannot be what principled libertarians actually believe in.

I imagine actual political conservatives are feeling much the same about the radical changes to our republic that are being brought about by the so-called "conservative" party.